Intercultural digital ethics: Understanding different points of view

- Posted on March 16, 2022

- Estimated reading time 4 minutes

In our previous post on intercultural digital ethics, we established the need for incorporating viewpoints from a wide variety of ethical frameworks; here we continue the conversation with practical ways to look at viewpoints from different cultures when making digital ethics decisions. While there are many ways to do this, we’re sharing our approach as one method you might consider applying yourself, as most of us work for companies that interact with and affect people in other parts of the world.

Identifying relevant stakeholder groups

Our first step toward understanding different cultural points of view was to select relevant value frameworks from around the world for our research. We selected six: Ubuntu, Islamic, Confucianism, Western, Shinto, and Buddhism. These selections were contingent on the availability of source material explaining their ethical values, a desire for regional variation, and admittedly limited time and resources. We recognize that a person could spend years or decades trying to fully grasp the ethical principles of any one of these systems, and there are far more systems in the world that we would have liked to include. However, we set our parameters narrowly to give us time to develop a workable approach and process.

You will likely choose a different set for your own purposes, based on where and with whom you do business. It’s important to note at this point that it may be infeasible to reflect 100% of the stakeholders who will potentially engage with your organization; the key is to lay a foundation for considering different points of view, which you may expand upon later.

Understanding various perspectives

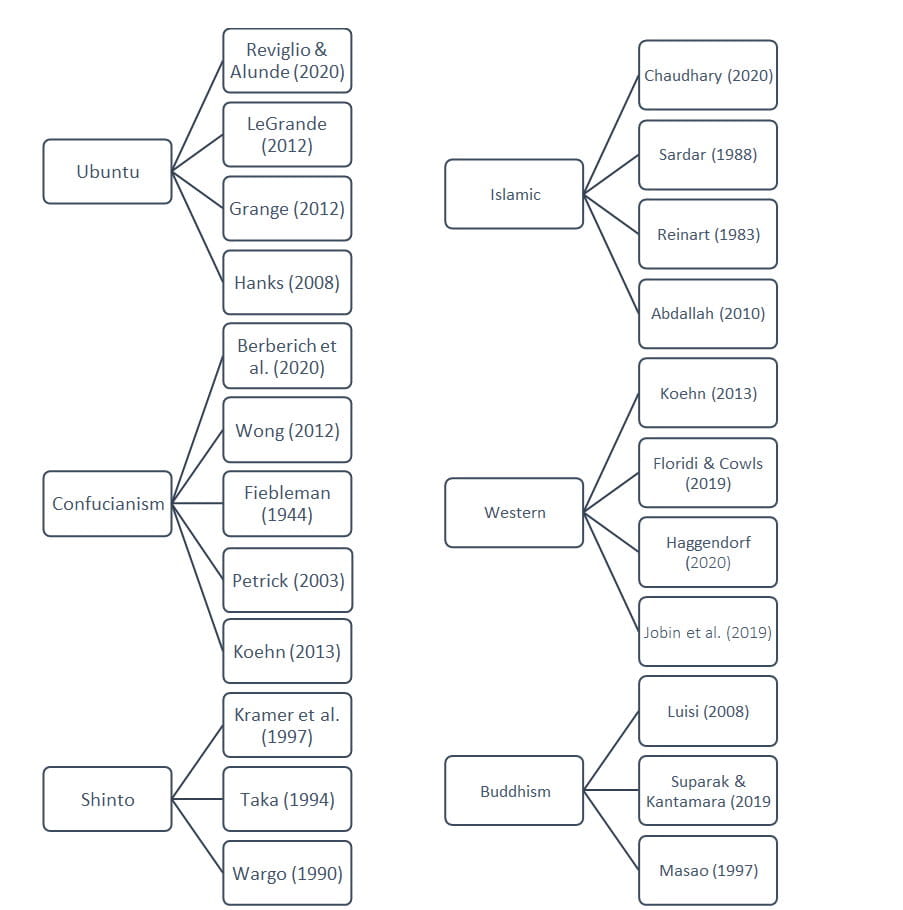

After identifying these six frames of reference, we identified primary sources to explain how each one conceptualized its values, ethics, and beliefs. We conducted a formal literature review of 25 sources from multiple fields of study (technology, philosophy, psychology, etc.), giving each value system equal weighting to avoid centering our findings on any one of them. (That is, we weren’t comparing them all to a single, “established” value system.)

Categorizing values

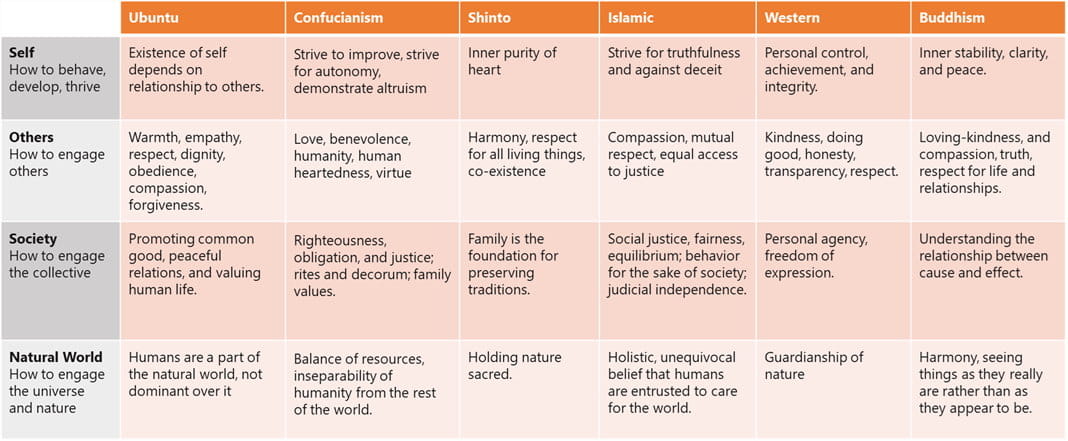

The value systems we selected clearly share common themes (respect, justice, truth, responsibility, etc.), but, as expected, it was impossible to find complete agreement or even clear conflict on any single issue. Instead, to help us identify points of similarity, difference, and emphasis, we decided to categorize each system’s value statements into four domains:

- Self: How an individual should behave, develop, and thrive

- Others: How an individual should engage with another individual

- Society: How an individual should engage with groups of other people

- Natural world: How an individual should engage with the universe and (non-human) natural world around them

It’s important to note that we generated this matrix with an understanding of each value system based on the cited literature’s interpretations. We mean this to be a demonstration of how business and technology leaders can go about understanding their various constituents before settling on a particular tech policy or decision with ethical implications. This is in no way meant to be an absolute or authoritative reflection of the values of these cultures, which we know are extremely nuanced and can vary between communities within each broad group. The value of this process is in creating dialog and working toward a mutually satisfactory outcome whenever possible. We recommend involving stakeholders representing the various groups who may be affected by your technology initiative, as they’ll likely understand important nuances better than you.
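For readers who want to keep such a categorization in a working form, the following is a minimal sketch of one way to record it, not the method used for the matrix described above. The function names (`empty_matrix`, `add_statement`, `empty_cells`) and the placeholder strings are hypothetical; only the six value systems and four domains come from this post. Each cell holds value statements together with the source they were drawn from, so that every entry stays traceable to the literature rather than to assumption.

```python
# The four domains and six value systems named in this post.
DOMAINS = ("Self", "Others", "Society", "Natural world")
SYSTEMS = ("Ubuntu", "Islamic", "Confucianism", "Western", "Shinto", "Buddhism")


def empty_matrix(systems=SYSTEMS, domains=DOMAINS):
    """Return {system: {domain: []}} with every cell present but empty."""
    return {s: {d: [] for d in domains} for s in systems}


def add_statement(matrix, system, domain, statement, source):
    """Record one value statement together with the source it came from."""
    if system not in matrix or domain not in matrix[system]:
        raise KeyError(f"unknown cell: {system!r} / {domain!r}")
    matrix[system][domain].append({"statement": statement, "source": source})


def empty_cells(matrix):
    """List (system, domain) cells with no statements yet (research gaps)."""
    return [
        (system, domain)
        for system, row in matrix.items()
        for domain, cell in row.items()
        if not cell
    ]


matrix = empty_matrix()
# Placeholder text only: fill cells from your own literature review.
add_statement(
    matrix,
    "Ubuntu",
    "Others",
    "<value statement from your sources>",
    "<citation>",
)
print(len(empty_cells(matrix)))  # 23: 24 cells in total, one filled
```

Listing the empty cells is a simple way to see where your research, or your conversations with stakeholders, still has gaps before you rely on the matrix for a decision.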

Using a matrix of values

Perhaps not coincidentally, Avanade’s digital ethics assessment framework also considers how technology affects individuals, society, and the environment. Our approach has always been to identify the potential positive and negative ethical impacts of whatever technology we’re assessing, then apply controls to make sure each impact reflects our intended values, with ethically positive outcomes whenever possible. Using our research on value systems from around the world, we may now incorporate a wider range of perspectives and objectives into our technology controls.

Again, it won’t always be possible to come up with a consensus that appeases every individual and value system, but the process will expose potential areas of concern, potential points of compromise, and even innovations that you would not have considered without diverse sources of input.

In the next part of our series, we address how to use these findings, using several vignettes as practice cases.

As always, we look forward to your input, and if you’re interested in a more in-depth discussion or help on this topic, you can contact us directly or post a comment below.

